File Distribution Systems

As machine learning and connected devices push advances, more and more information about people’s interests and searches gets collected and analyzed, both for profit and for human-behavior analytics. Data volumes have skyrocketed: more data has been generated in the last two years than in the entire prior history of humanity.

We constantly generate data. On Google alone, we submit 40,000 search queries per second. That amounts to 1.2 trillion searches yearly! People share more than 100 terabytes of data on Facebook daily. Every minute, users send 31 million messages and view 2.7 million videos.

Surprisingly, 99.5% of collected data is never used or analyzed. So much potential goes to waste! Less than 40% of the structured data collected by big corporations gets used; the rest is backed up to satisfy government retention policies.

The statistics show that revenue generated from big data keeps growing. In 2015, it reached $122 billion; in 2019, it was roughly $190 billion; and $274.3 billion is expected in 2022. Worldwide data is expected to hit 175 zettabytes by 2025, representing a 61% CAGR (Seagate’s Jeff Nygaard, 2019). By 2025, 51% of that data will be in data centers and 49% in the public cloud, and 90 ZB of it will come from IoT devices.

The traditional way of managing data is already out of fashion. How are you managing your organization’s data today? Are you still piling up dated backups on tapes and drives for safekeeping? Are you still doing large data restorations? Are you still rendering data that is over 4–7 years old useless?

In 2020, your organization needs to put its resources to better use: it cannot afford to have computing resources standing idle, waiting for peak days or disasters. A 1 TB file can take up to an hour and a half to restore on an average i7 machine with 16 GB of RAM. Now imagine a 200 GB file that has to be compressed, backed up, validated, copied to a device, validated again, decompressed, restored onto the new machine and brought online. All of this can take up to 4–8 days, depending on many factors, including the team of experts working on the task.

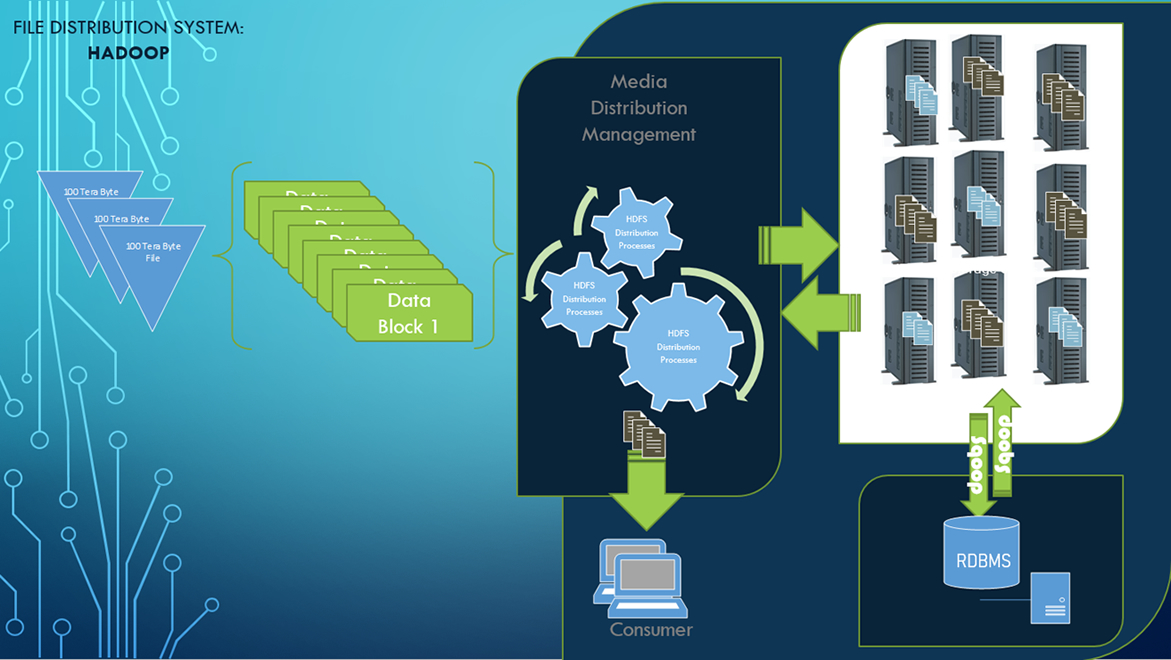

With HDFS, Kazama can put your system’s resources to better use by:

- Making sure your systems are online 24-7

- Eliminating daily backup steps that can strain your resources unnecessarily

- Making data available for analysis and analytics

- Distributing data intelligently, based on how frequently users access it

- Eliminating ad hoc data copies that create uncontrolled duplication.
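To make the "online 24-7" and "distributing data" points more concrete, here is a minimal sketch of the idea HDFS is built on: a file is split into fixed-size blocks, and each block is stored on several different nodes, so losing a node does not make the file unreadable. The 128 MB block size and replication factor of 3 match the HDFS defaults (`dfs.blocksize`, `dfs.replication`), but the functions `split_into_blocks`, `place_replicas` and `readable_after_failure` are hypothetical helpers for illustration only. Real HDFS uses a rack-aware placement policy managed by the NameNode; the round-robin placement below is a deliberate simplification, not Kazama’s or Hadoop’s actual implementation.

```python
from __future__ import annotations

BLOCK_SIZE_MB = 128  # HDFS default block size (dfs.blocksize) in Hadoop 2.x and later
REPLICATION = 3      # HDFS default replication factor (dfs.replication)


def split_into_blocks(file_size_mb: int, block_size_mb: int = BLOCK_SIZE_MB) -> list[int]:
    """Hypothetical helper: return the size of each block; the last block may be smaller."""
    full, rest = divmod(file_size_mb, block_size_mb)
    return [block_size_mb] * full + ([rest] if rest else [])


def place_replicas(num_blocks: int, nodes: list[str],
                   replication: int = REPLICATION) -> dict[int, list[str]]:
    """Hypothetical helper: put each block on `replication` distinct nodes.

    Simplification: round-robin placement, not HDFS's rack-aware policy.
    """
    if replication > len(nodes):
        raise ValueError("need at least as many nodes as replicas")
    placement: dict[int, list[str]] = {}
    for block_id in range(num_blocks):
        start = block_id % len(nodes)  # rotate the starting node to spread load
        placement[block_id] = [nodes[(start + i) % len(nodes)] for i in range(replication)]
    return placement


def readable_after_failure(placement: dict[int, list[str]], failed: set[str]) -> bool:
    """A file stays readable if every block still has at least one live replica."""
    return all(any(node not in failed for node in replicas) for replicas in placement.values())


if __name__ == "__main__":
    nodes = ["node1", "node2", "node3", "node4"]
    blocks = split_into_blocks(300)          # a 300 MB file
    placement = place_replicas(len(blocks), nodes)
    print(blocks)                            # [128, 128, 44]
    print(placement[0])                      # ['node1', 'node2', 'node3']
    print(readable_after_failure(placement, {"node1", "node2"}))  # True
```

Because every block lives on three distinct nodes, any two nodes can fail and the file is still fully readable, without a separate restore step. This is also why replication in HDFS is controlled (a fixed number of copies per block) rather than ad hoc.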

Hadoop Data Architecture